How many of them would be as good as they are if they didn’t have the opportunity to compete at the highest levels over and over, honing their skills and overcoming their nerves?

I think the issue is being clouded by looking at certain individuals.

The likes of Peter will never fit into any ranking system.

I was more interested in the current mid-range players, where the location and number of comps entered have a bigger impact than "skill".

I know all of the UK players very well. The currently ranked no. 2 in the UK has an inferior head to head comparison against EVERY other UK player in the top 10 (and many below that as well). Surely that shows a major flaw in the current system. These aren’t players who don’t compete often or don’t travel.

I can point out other players in the US who have a similar profile. A certain player ranked around 170 has entered pushing 200 comps, won just 2 and had an inferior head to head against well over 200 people. Again showing you can work your way up the rankings based purely on brute force, rather than skill.

That is what the current system is. My new system was designed to show relative skill.

Players may not play against each other but with the degrees of separation argument you can compare everyone in this way.

Is it perfect? No. Does it more accurately reflect relative skill? IMHO yes.

There will be flaws with any system, and some people will do what it takes to climb the rankings to boost their own ego (you’ve only got to look at the emergence of a new tournament format every time there’s a rule change so people can maximise their WPPR points). So what?

I think the vast majority of people who enter comps do so because they love playing pinball, not because they can move up a ranking system which nobody outside of the community gives two hoots about.

If the IFPA was to disband tomorrow would all competition stop without WPPR points?

The idea was to try and get a more balanced, skill-based ranking system rather than the current achievement-based system, whose flaws have already been mentioned.

I understand the idea. Here is my 2 cents:

When I first got into competitive pinball in 2001, I played 1 event a year (PAPA - which was called Pinburgh at the time), and participated in a team league (which really was a team league - no way to parse out an individual’s performance). I didn’t travel, because I wasn’t “good enough” and didn’t see the point. From then until 2010, I played in my 1 yearly event, added an individual league, and the team league was disbanded. I traveled once in that time, to Akron for a show and played in some tournament where we all qualified on the same machine (there was a bank of Godzillas). I also regularly played heads up matches utilizing the old Coinball website.

That was the extent of the competitive pinball available to me locally, and my location was Pittsburgh. I had never heard of IFPA up until 2010. In 2010 I left for Hawaii and didn’t return to the Burgh until 2013. My 3 year hiatus meant I was now among the unranked pinball players since I couldn’t find a working machine on the island, let alone a competitive scene. Now there were local events (one-offs) and multiple locations, leagues and everyone was pretty good!

Now IFPA was big. Everyone knew about it. People were talking about their rankings. People understood how it worked. The way I understand it looking back is “Every annual event had a base value of 25 points. If a location had more than 1 event a year, the # of events acted as a divisor on the WPPR value,” but maybe that is too simplistic. This system made the middle rankings inaccurate because events were different yet all events got the base 25 and the base 25 was an enormous boost to the winner. This system also had a chilling effect on the number of events because TDs didn’t want to degrade their own WPPR values by hosting another tourney. The next iteration of WPPR rankings addressed both issues, by allowing each event to stand on its own and doing away with the base 25.

Since then the scene has exploded. We can directly attribute much of this to the IFPA. Here’s the rub, though - IFPA rankings don’t really matter. Outside of the players that get into the IFPA World Championships based on the at large bids, your general ranking has no effect on anything. (The SCS is different because your ranking doesn’t matter to the SCS, just the points you earn in a calendar year). I believe there are no tournaments other than the IFPA World Championships that restrict entry into the highest division on anything other than performance in that tournament. So while it is nice to accurately rank people, at the end of the day, it doesn’t matter because if you play well enough at [Insert Tournament Name] you can win first place in the top division.

So why does any of that matter? Because if what you are proposing means taking the weekly tournament where X doesn’t mind having a few drinks while playing and counting it against X, since it affects their rating or their eff%, maybe they won’t play it. But, as I stated in an earlier post, maybe the $1 fee will cause that tourney to fall off anyhow. And, I don’t think you can go back in time and take non-pertinent data (at the time) to change people’s rankings (I mean, IFPA can do whatever they want, but I mean for the sake of this conversation). People participated in events with the understanding that their top 20 scores would be used to rank them. They didn’t avoid events with formats that might be harmful to their ‘rating’ or ‘eff%.’ But they might have if they knew those things mattered.

It is obvious that people care about their ranking. Just having the ranking out there has influenced TD decisions on everything from format to the # of tournies at a location per year. It has also greatly influenced player participation and willingness to travel outside their locale. The funny thing is how little it affects 99.9% of us, myself included (since I didn’t qualify for IFPA Worlds)

You touch on an interesting point here. Please bear with me, it will take a few sentences…

I used to play as a teenager, and then not at all for about 35 years. About 15 months ago, I stumbled into a pinball tournament pretty much by accident, and then discovered that there is actually such a thing as a pinball world championship. As a 57-year-old, there is no way I would justify to myself spending hours and hours each week playing pinball just for the sake of it. It would be too self-indulgent, and my wife would probably think of it as something akin to a drug addiction.

But, seeing there actually is a world championship, this casts the whole thing in an entirely different light: “No, I’m not goofing off, I’m diligently practicing for my next tournament. This is serious! I need to make the next bracket in the rankings to get into B division!” And my wife suddenly looks at it as her husband having an enjoyable competitive hobby and a sense of purpose, instead of (not) dealing with depression by spending endless and mindless hours in front of pinball machines…

The mere fact that there is a “World Pinball Player Ranking” imbues the whole thing with a sense of gravity and importance that wouldn’t exist if those WPPR points weren’t there. The points add a new level of meaning all on their own: “It’s a sport, not a pastime!”

So, yes, there are people who care about their ranking. It matters to them, rightly or wrongly.

The IFPA rankings allow anyone in the world to look up any player with an IFPA number to see “how well or how poorly” that player is doing. By publishing this list, the IFPA has, intentionally or unintentionally, created a public perception that being at position 99 on that list means that the corresponding player is currently the 99th-best player in the world. Why? Because that is how every other sports ranking list across the known universe works. (All the recent TV coverage of various pinball players I have seen has taken great care to mention that “player X is currently the Y-th highest ranked player in the world.”)

So, when people look at the list, they expect it to accurately reflect the notion of “best”. I believe that is inevitable, no matter how much explaining the IFPA does to show otherwise, or whether the intent of the list is or isn’t to reflect skill level. Read my lips: anyone who isn’t intimately familiar with how the rankings are computed will automatically and instinctively assume that the list reflects skill level.

I see this even among fellow competitive players. They think that the list reflects skill, and they measure their sense of achievement by their position on the list. To me, that alone is sufficient reason to try and make the rankings reflect skill level as accurately as is possible.

Or stop publishing the list altogether. No more rankings, no more problems…

This is the “ranking” system used on the UK Pinball League website. It is based purely on points earned in the different regional league meetings, with depreciation over each year.

You’ll notice how many people on that list don’t appear in the IFPA rankings, and others in a vastly different order, as it is an achievement based system.

I think we can all agree that would be quite foolish

Now that the IFPA site is back up we can see that if AUS players think Peter is under-ranked and care, they should start running a max TGP tournament at the monthly he wins every month. It is trending towards a 50 person monthly (still too many unranked players). If the TD fixes the TGP Peter would be top 30 in a year or 2. No need to travel. Looks like a good active scene. There is a big gap between the 500 WPPR players and the 1000 WPPR players. To get to 1000 is hard.

I think the system is working great. One of my local friends decided to drive to BPSO to collect the fraction of a point he has a chance to get so he can watch himself move. One of my leagues is definitely helped attendance wise by the system. PCS makes me play more, WPPR makes me travel more.

Once again, as others have pointed out: the lifetime head-to-head record is interesting, but not an accurate reflection of recent history. The IFPA system, like many (all?) individual sports rankings, has an element of time that gives more weight to recent performance than to older performance.

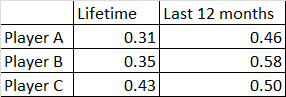

In the case of your comparison among the UK players, the shift is quite dramatic. Current UK #2 has a very low lifetime “win” record against the three most often faced players. However, quickly tallying the results from the last 12 months, the records are all closer to 50/50:

| Opponent | Lifetime | Last 12 months |
|---|---|---|
| Player A | 0.31 | 0.46 |
| Player B | 0.35 | 0.58 |
| Player C | 0.43 | 0.50 |

That’s because there are far fewer data points to compare.

Lifetime is no real indicator, as it doesn’t take into account player improvement, or worsening.

That’s why I did it for 18 months as well.
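The lifetime versus recent-window comparison being argued over here can be sketched in a few lines. This is only an illustration: the `win_rate` helper, the match tuples, and the dates are all made up for the example and are not taken from IFPA data or from either poster's spreadsheet.

```python
from datetime import date, timedelta

def win_rate(matches, opponent, since=None):
    """Share of head-to-head results won against `opponent`.

    matches: iterable of (played_on, opponent_name, won) tuples (hypothetical format).
    since:   only count matches on or after this date; None means lifetime.
    Returns None when no matches fall inside the window.
    """
    results = [won for played_on, name, won in matches
               if name == opponent and (since is None or played_on >= since)]
    if not results:
        return None
    return sum(results) / len(results)

# Made-up results, purely for illustration
today = date(2019, 6, 1)
matches = [
    (date(2015, 3, 1), "Player A", False),
    (date(2016, 7, 9), "Player A", False),
    (date(2017, 2, 4), "Player A", False),
    (date(2018, 9, 15), "Player A", True),
    (date(2019, 1, 20), "Player A", False),
    (date(2019, 4, 6), "Player A", True),
]

lifetime = win_rate(matches, "Player A")
recent = win_rate(matches, "Player A", since=today - timedelta(days=365))
print(lifetime, recent)
```

With only three results inside the 12-month window, a single match swings the recent figure by a third, which is the "fewer data points" objection in miniature. Widening the window (18 months, say) trades recency for sample size.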

Just maxing out TGP would not give the same amount of WPPR points as a comp in US based purely on the ratings of the players playing in the US comp due to the way WPPR points are currently allocated.

Not at first, but would get better over time. Once you get those 30-50 people past the 5 tourney threshold and get 100% TGP, points will go way up.

Can you provide examples of what these super overvalued tournaments are? What are the new superleagues? I really don’t see it. SFPD is worth 40 points, twice a year. Plus you need to beat Andrei if you want those. NEPL is 3 times a year and is worth 43 points, but you need to beat Suppressed Player to win it. Is Peter at that level? Maybe, I don’t know. Sunshine monthly league is worth 23, so comparable to the monthly in AUS if it was max TGP.

There are bigger tournaments in the US. You can travel to a multi-day event almost every weekend. But playing in those environments is a different beast. Plus there is huge work by those organizers to put those on. These organizers get rewarded by having their events worth more, which draws more people and in turn makes their events worth more.

I look at Rating and Eff % quite frequently when getting into discussions that involve comparing players, particularly when the number of events played is imbalanced.

Players who don’t play often can just sort the list by one of those other metrics, and then brag about their newly improved ranking.

There are three Top 50ish players in the Denver area. That doesn’t include Walt Wood, who you’ve never heard of. 51st in Eff % and 113th in Rating. He’s only played in 22 events in the last three years and yet lives a few miles from a venue that now has a monthly worth 25 WPPRs (which he now often can’t play in because he works at the bar).

Should he be higher than 989th? I would argue not, because he barely plays and IFPA ranking should tell me who is doing the best right now, at the highest levels of competition. When he does show up, I tell people he’s probably the best player in the room, and cross my fingers that I don’t have to play against him.

Everyone has their own reasons for not being ranked higher. I don’t know what Walt’s are, but if he wants to brag about how good he is, he should probably ignore IFPA Ranking.

That had me LOL.

I literally just clicked on the first tournament I found (in which the highest rated ‘UK’ person who lives in America had entered)

Ohio Pinball Wizard

Ranking Strength 14.40

Ratings Strength 8.86

Base value 32

Rated players 79

TGP 100%

Tournament Value 55.27

Now compare that to the largest comp in the UK (UK Pinball League)

Ranking Strength 4.45

Ratings Strength 6.65

Base Value 32

Rated players 163

TGP 100%

Tournament Value 43.1

So the UK comp has over double the number of players, lasts a full year, and includes all of the best players in the UK, and it is still worth less than a comp run in the US due to the way the WPPR points are allocated.
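The two sets of figures quoted above are consistent with the tournament value being base value plus ranking strength plus ratings strength, scaled by TGP. That relationship is inferred here from the quoted numbers only and is an assumption, not a statement of the official IFPA formula; `tournament_value` is a hypothetical helper.

```python
def tournament_value(base, ranking_strength, ratings_strength, tgp):
    # Assumption: the three components add up, then scale by TGP (1.0 = 100%)
    return (base + ranking_strength + ratings_strength) * tgp

# Figures as quoted in the post above
ohio = tournament_value(32, 14.40, 8.86, 1.0)
uk_league = tournament_value(32, 4.45, 6.65, 1.0)

# Both events have the same base value, so the difference comes
# entirely from the two player-strength components
strength_gap = (14.40 + 8.86) - (4.45 + 6.65)

print(round(ohio, 2), round(uk_league, 2), round(strength_gap, 2))
```

Under that assumption the sums land within a rounding cent of the quoted 55.27 and 43.1. Since both events show a base value of 32 and 100% TGP, the whole gap comes from who is playing rather than from how many are playing.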

The US players get higher WPPR pts due to the tournament value, which in turn increases the tournament value of the next one they enter etc. etc. etc, and they’re not insignificant points.

It’s not simply about maxing out competitors and TGP.

Then multiply this by just 10/20 competitions a year and it creates an ever widening gap, which cannot be bridged competing outside of the US.

I travelled to a competition in France, the closest possible, so I could still drive, but still needed a Friday-Monday trip.

There were 59 competitors, although only 30 officially ranked. 14 of the 29 unranked players finished above Franck Bona ranked 36th in the world, so they can’t just be discounted as insignificant because they haven’t played 5 comps. 3 of them finished in the top 10.

I won and got 5.90 pts. I would only have had to finish 23rd in the Ohio comp to get the same WPPR points.

It’s a completely separate bubble the US competes in, which it appears many still don’t understand.

Getting back to my original two statements

How many people agree with them both?

0-3 on adding to the “Banners in the rafters” system

This also had me LOL.

Because a group of players takes the IFPA Rankings as gospel for who the “Most skilled players are”, it’s the IFPA’s responsibility to change their system to be a SKILL BASED SYSTEM . . . or the other option is to kill the system altogether?

The other option, like most other sports ranking systems is to live with the consequences of what your ranking metrics are, knowing that there’s always a group of people that won’t be accurately reflected in the system.

I’m all for the TrueRank style analysis that Wayne brought up. Otherwise I wouldn’t have created a spreadsheet of my own years ago that coincidentally matches the same kind of investigating that Wayne is pursuing. I think head-to-head records against just that top tier of player could be a valuable metric on its own. What that doesn’t do, however, is accurately rank EVERYONE in the system. This metric may dial in the top 250 better, but if you stretch this concept to the top 10,000, it falls apart.

I can see a path where the IFPA releases the “POWER 100”, based on the winning percentage of the top 250 WPPR players against each other, but outside of that ego boost for whoever would jump up into the “POWER” ranking, I can’t see shifting our overall system that captures ALL PLAYERS into this kind of model.

The promotional tool of the WPPR system is the biggest part of what keeps people interested. The biggest satisfaction that I’ve seen is the movement a player sees when they start playing. Every time they play, they move up, full stop. The fact it’s a POSITIVE ONLY SYSTEM is BY DESIGN. It’s meant to pull the player in, motivate them to play more, and get them hooked with their first 20 events pushing them up the charts. It becomes almost Pavlovian. Typically you won’t see a new player plateau for years as they continue to push out their lowest value event and replace it with a higher scoring event.
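The "positive only" property described here can be shown with a toy model that ranks a player on the sum of their best 20 event results, as mentioned earlier in the thread. This is a simplified sketch: `ranking_total` is a hypothetical helper, the point values are invented, and it deliberately ignores the time weighting of older results.

```python
def ranking_total(event_points, keep=20):
    """Sum of a player's `keep` best event results (toy model, no time decay)."""
    return sum(sorted(event_points, reverse=True)[:keep])

# Invented history: twenty events worth 1.5 points each
history = [1.5] * 20

before = ranking_total(history)
after_bad = ranking_total(history + [0.2])   # a poor result is simply not counted
after_good = ranking_total(history + [4.0])  # a good result pushes out the lowest one

print(before, after_bad, after_good)
```

In this model a bad night can never lower the total, which is what makes "casually competing" free. A rating that moves both up and down has no equivalent of the dropped 21st result.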

These players are the backbone of what makes WPPR successful. They are what gives WPPRs their importance in the world. These players don’t care whether they are actually ranked 4028th in the world. They just know that they started 19 months ago, and they saw this path from 30,000th to 22,640th to 17,267th to 13,426th and up and up they go. That’s the joyride they get out of the system.

The negative impact on that same player enjoying the ride up the rankings when put into a skill based system means someone like my mom starts playing, gets thrown in at some ‘initial level’. As she plays she moves down the rankings, then down some more, then down some more, until the idea of the ranking system doesn’t just not add value to her playing experience, it’s a DETRIMENT to her playing experience.

On the higher end of the player scale, I enjoy “casually competing” in events where I stand to gain zero WPPR value. Maybe I’ll have a beer... or two... or three when playing, because that particular event means nothing to me. A system that isn’t positive-only takes away the opportunity to casually compete. I’m left with NOT entering events: maybe I don’t throw in that random PAPA Classics entry because I don’t feel like finishing 40th. Maybe I stop going to my monthly local bar event because I don’t feel like taking it “seriously”. To me that’s a big negative side effect of switching to a non-positive-only system.

There’s far more at play here than creating accurate rankings . . .